
10/5/2023 0 Comments Simply fortran cuda supportBut while CUDA C declares variables that reside in device memory in a conventional manner and uses CUDA-specific routines to allocate data on the GPU and transfer data between the CPU and GPU, CUDA Fortran uses the device variable attribute to indicate which data reside in device memory and uses conventional means to allocate and transfer data. As with CUDA C, the host and device in CUDA Fortran have separate memory spaces, both of which are managed from host code. The real arrays x and y are the host arrays, declared in the typical fashion, and the x_d and y_d arrays are device arrays declared with the device variable attribute. In the variable declaration section of the code, two sets of arrays are defined: real :: x(N), y(N), a The first is the user-defined module mathOps which contains the saxpy kernel, and the second is the cudafor module which contains the CUDA Fortran definitions. The program testSaxpy uses two modules: use mathOps

Let’s begin our discussion of this program with the host code.

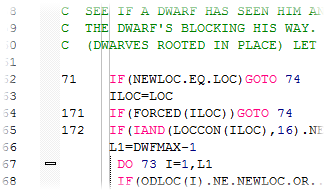

The module mathOps above contains the subroutine saxpy, which is the kernel that is performed on the GPU, and the program testSaxpy is the host code. I = blockDim%x * (blockIdx%x - 1) + threadIdx%x The complete SAXPY code is: module mathOpsĪttributes(global) subroutine saxpy(x, y, a) In this post I want to dissect a similar version of SAXPY, explaining in detail what is done and why. SAXPY stands for “Single-precision A*X Plus Y”, and is a good “hello world” example for parallel computation. In a recent post, Mark Harris illustrated Six Ways to SAXPY, which includes a CUDA Fortran version. Keeping this sequence of operations in mind, let’s look at a CUDA Fortran example.
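For reference, here is a minimal sketch of how the pieces described in this post might fit together into one program. The module, kernel, and array names follow the text. The problem size `N`, the block size of 256 threads, the initial values, and the final error check are illustrative assumptions, as is the use of the NVIDIA HPC SDK compiler (`nvfortran`) with a `.cuf` source file.

```fortran
module mathOps
contains
  ! Kernel: runs on the GPU, one thread per array element.
  attributes(global) subroutine saxpy(x, y, a)
    implicit none
    real :: x(:), y(:)
    real, value :: a          ! scalar passed by value from the host
    integer :: i, n
    n = size(x)
    ! Block and thread indices are 1-based in CUDA Fortran.
    i = blockDim%x * (blockIdx%x - 1) + threadIdx%x
    ! Guard: the last block may contain threads past the end of the array.
    if (i <= n) y(i) = y(i) + a*x(i)
  end subroutine saxpy
end module mathOps

program testSaxpy
  use mathOps
  use cudafor
  implicit none
  integer, parameter :: N = 40000          ! assumed problem size
  real :: x(N), y(N), a                    ! host arrays
  real, device :: x_d(N), y_d(N)           ! device arrays
  type(dim3) :: grid, tBlock

  ! Assumed launch configuration: 256 threads per block,
  ! enough blocks to cover all N elements.
  tBlock = dim3(256, 1, 1)
  grid = dim3(ceiling(real(N)/tBlock%x), 1, 1)

  ! Assumed test data.
  x = 1.0; y = 2.0; a = 2.0

  ! Host-to-device transfers are plain assignments.
  x_d = x
  y_d = y

  call saxpy<<<grid, tBlock>>>(x_d, y_d, a)

  ! Device-to-host transfer, again by assignment.
  y = y_d

  ! With the data above every element should be 2.0 + 2.0*1.0 = 4.0.
  write(*,*) 'Max error: ', maxval(abs(y - 4.0))
end program testSaxpy
```

Saved as, say, `saxpy.cuf`, this should build with `nvfortran saxpy.cuf`. The bounds check in the kernel matters whenever `N` is not a multiple of the block size, as is the case for the values assumed here.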
